Configuring VNC on Linux 7 works differently from earlier releases, again because Linux 7 changed the way services are managed (systemd).

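Installing VNC requires a yum repository. I usually set up a local yum repository, which can be done in two main ways:

1. Use a VirtualBox shared folder

2. Use the virtual CD/DVD drive

The configuration is the same as on releases before Linux 7 (Linux 6 and Linux 5), so I won't repeat it here.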
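For reference only, a local repository for mounted installation media can be defined with a short .repo file. The sketch below writes one. The repo id `local-media`, the mount point `/mnt/cdrom` and the function name `write_local_repo` are hypothetical, and `gpgcheck=0` is only reasonable for trusted local media.

```shell
#!/bin/bash
# Sketch: write a yum repository definition that points at a local directory,
# e.g. installation media mounted at /mnt/cdrom (hypothetical path) or a
# VirtualBox shared folder. The repo id "local-media" is a hypothetical name.
write_local_repo() {
    local media_dir="$1" repo_file="$2"
    cat > "$repo_file" <<EOF
[local-media]
name=Local installation media
baseurl=file://${media_dir}
enabled=1
gpgcheck=0
EOF
}

# Example usage (as root):
#   mount -o loop /path/to/installation.iso /mnt/cdrom   # or: mount /dev/cdrom /mnt/cdrom
#   write_local_repo /mnt/cdrom /etc/yum.repos.d/local-media.repo
#   yum clean all
#   yum list | grep vnc
```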


List the VNC packages available in the local yum repository:

[root@lunar1 yum.repos.d]# yum list|grep vnc

gtk-vnc2.x86_64 0.5.2-7.el7 @anaconda

gvnc.x86_64 0.5.2-7.el7 @anaconda

libvncserver.x86_64 0.9.9-9.el7_0.1 @anaconda

gtk-vnc2.i686 0.5.2-7.el7 OEL72_stage

gvnc.i686 0.5.2-7.el7 OEL72_stage

libvncserver.i686 0.9.9-9.el7_0.1 OEL72_stage

tigervnc.x86_64 1.3.1-3.el7 CentOS7_stage

tigervnc-icons.noarch 1.3.1-3.el7 CentOS7_stage

tigervnc-license.noarch 1.3.1-3.el7 CentOS7_stage

tigervnc-server.x86_64 1.3.1-3.el7 CentOS7_stage

tigervnc-server-minimal.x86_64 1.3.1-3.el7 CentOS7_stage

[root@lunar1 yum.repos.d]#

I usually choose TigerVNC.

[root@lunar1 yum.repos.d]# yum install tigervnc-server.x86_64

Loaded plugins: fastestmirror, langpacks

Loading mirror speeds from cached hostfile

Resolving Dependencies

--> Running transaction check

---> Package tigervnc-server.x86_64 0:1.3.1-3.el7 will be installed

--> Processing Dependency: tigervnc-server-minimal for package: tigervnc-server-1.3.1-3.el7.x86_64

--> Running transaction check

---> Package tigervnc-server-minimal.x86_64 0:1.3.1-3.el7 will be installed

--> Processing Dependency: tigervnc-license for package: tigervnc-server-minimal-1.3.1-3.el7.x86_64

--> Running transaction check

---> Package tigervnc-license.noarch 0:1.3.1-3.el7 will be installed

--> Finished Dependency Resolution

Dependencies Resolved

================================================================================================================================================================================================

Package Arch Version Repository Size

================================================================================================================================================================================================

Installing:

tigervnc-server x86_64 1.3.1-3.el7 CentOS7_stage 202 k

Installing for dependencies:

tigervnc-license noarch 1.3.1-3.el7 CentOS7_stage 25 k

tigervnc-server-minimal x86_64 1.3.1-3.el7 CentOS7_stage 1.0 M

Transaction Summary

================================================================================================================================================================================================

Install 1 Package (+2 Dependent packages)

Total download size: 1.2 M

Installed size: 3.0 M

Is this ok [y/d/N]: y

Downloading packages:

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Total 7.9 MB/s | 1.2 MB 00:00:00

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : tigervnc-license-1.3.1-3.el7.noarch 1/3

Installing : tigervnc-server-minimal-1.3.1-3.el7.x86_64 2/3

Installing : tigervnc-server-1.3.1-3.el7.x86_64 3/3

Verifying : tigervnc-license-1.3.1-3.el7.noarch 1/3

Verifying : tigervnc-server-minimal-1.3.1-3.el7.x86_64 2/3

Verifying : tigervnc-server-1.3.1-3.el7.x86_64 3/3

Installed:

tigervnc-server.x86_64 0:1.3.1-3.el7

Dependency Installed:

tigervnc-license.noarch 0:1.3.1-3.el7 tigervnc-server-minimal.x86_64 0:1.3.1-3.el7

Complete!

[root@lunar1 yum.repos.d]#

On releases before Linux 7, installing VNC usually meant running vncserver to set a password and then editing /etc/sysconfig/vncservers.

On Linux 7 this file still exists, but it contains only one line:

[root@lunar1 ~]# cat /etc/sysconfig/vncservers

# THIS FILE HAS BEEN REPLACED BY /lib/systemd/system/vncserver@.service

[root@lunar1 ~]#

The content of /etc/sysconfig/vncservers is effectively telling us that VNC is now run as a systemd-managed service.

Now let's look at that unit file:

[root@lunar1 ~]# cat /lib/systemd/system/vncserver@.service

# The vncserver service unit file

#

# Quick HowTo:

# 1. Copy this file to /etc/systemd/system/vncserver@.service

# 2. Edit <USER> and vncserver parameters appropriately

# ("runuser -l <USER> -c /usr/bin/vncserver %i -arg1 -arg2")

# 3. Run `systemctl daemon-reload`

# 4. Run `systemctl enable vncserver@:<display>.service`

#

# DO NOT RUN THIS SERVICE if your local area network is

# untrusted! For a secure way of using VNC, you should

# limit connections to the local host and then tunnel from

# the machine you want to view VNC on (host A) to the machine

# whose VNC output you want to view (host B)

#

# [user@hostA ~]$ ssh -v -C -L 590N:localhost:590M hostB

#

# this will open a connection on port 590N of your hostA to hostB's port 590M

# (in fact, it ssh-connects to hostB and then connects to localhost (on hostB).

# See the ssh man page for details on port forwarding)

#

# You can then point a VNC client on hostA at vncdisplay N of localhost and with

# the help of ssh, you end up seeing what hostB makes available on port 590M

#

# Use "-nolisten tcp" to prevent X connections to your VNC server via TCP.

#

# Use "-localhost" to prevent remote VNC clients connecting except when

# doing so through a secure tunnel. See the "-via" option in the

# `man vncviewer' manual page.

[Unit]

Description=Remote desktop service (VNC)

After=syslog.target network.target

[Service]

Type=forking

# Clean any existing files in /tmp/.X11-unix environment

ExecStartPre=/bin/sh -c '/usr/bin/vncserver -kill %i > /dev/null 2>&1 || :'

ExecStart=/usr/sbin/runuser -l <USER> -c "/usr/bin/vncserver %i"

PIDFile=/home/<USER>/.vnc/%H%i.pid

ExecStop=/bin/sh -c '/usr/bin/vncserver -kill %i > /dev/null 2>&1 || :'

[Install]

WantedBy=multi-user.target

[root@lunar1 ~]#

Note the important hint in this file; it tells us clearly how to configure VNC:

# Quick HowTo:

# 1. Copy this file to /etc/systemd/system/vncserver@.service

# 2. Edit <USER> and vncserver parameters appropriately

# ("runuser -l <USER> -c /usr/bin/vncserver %i -arg1 -arg2")

# 3. Run `systemctl daemon-reload`

# 4. Run `systemctl enable vncserver@:<display>.service`
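The four HowTo steps can also be scripted. The sketch below is an illustration under assumptions, not the procedure used in this article: it renders the template for one user into a per-display file under /etc/systemd/system (the location the HowTo suggests), replaces `<USER>`, and fixes the PIDFile path for users whose home is not /home/<user> (such as root). `%i` and `%H` are left alone because systemd expands them from the instance name (e.g. `:1`) and the host name. The function names are hypothetical, and the template's Type=forking is kept as shipped.

```shell
#!/bin/bash
# Sketch: automate the "Quick HowTo" in vncserver@.service for one user/display.
# Assumption: TigerVNC template at /usr/lib/systemd/system/vncserver@.service.

# Print the template (stdin) with <USER> replaced. The template's PIDFile path
# is /home/<USER>/..., so that is replaced first with the real home directory
# (root's home is /root, not /home/root). %i and %H are left for systemd.
render_vnc_unit() {
    local user="$1" home_dir="$2"
    sed -e "s#/home/<USER>#${home_dir}#g" -e "s#<USER>#${user}#g"
}

# Hypothetical helper: install and enable vncserver@<display>.service for a user.
install_vnc_unit() {
    local user="$1" display="$2"          # display such as ":1"
    local template=/usr/lib/systemd/system/vncserver@.service
    local unit="/etc/systemd/system/vncserver@${display}.service"
    local home_dir
    case "$display" in
        :[0-9]*) ;;
        *) echo "display must look like :1" >&2; return 1 ;;
    esac
    home_dir="$(getent passwd "$user" | cut -d: -f6)"
    if [ -z "$home_dir" ]; then
        echo "unknown user: $user" >&2
        return 1
    fi
    render_vnc_unit "$user" "$home_dir" < "$template" > "$unit" || return 1
    systemctl daemon-reload
    systemctl enable "vncserver@${display}.service"
}

# Run only when executed directly with two arguments (as root), e.g.:
#   ./vnc-unit.sh oracle :3
if [ "${BASH_SOURCE[0]}" = "$0" ] && [ $# -eq 2 ]; then
    install_vnc_unit "$1" "$2"
fi
```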

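Following these hints, first create the unit file for the root user's VNC service. (The HowTo copies the template to /etc/systemd/system; here the copy goes to /lib/systemd/system, which on Linux 7 is the same directory as /usr/lib/systemd/system. That works too, but /etc/systemd/system is the usual place for locally modified units and takes precedence over /usr/lib/systemd/system.)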

[root@lunar ~]# cp /lib/systemd/system/vncserver@.service /lib/systemd/system/vncserver@:1.service

[root@lunar ~]# ll /lib/systemd/system/vncserver@*

-rw-r--r-- 1 root root 1744 Oct 8 20:05 /lib/systemd/system/vncserver@:1.service

-rw-r--r-- 1 root root 1744 May 7 2014 /lib/systemd/system/vncserver@.service

[root@lunar ~]#

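Then edit the copy as the template's instructions describe. Compared with the original, the modified version replaces <USER> with root and %i with :1, points PIDFile at /root/.vnc (root's home directory is /root, not /home/root), and changes Type from forking to simple.

Before: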

[Unit]

Description=Remote desktop service (VNC)

After=syslog.target network.target

[Service]

Type=forking

# Clean any existing files in /tmp/.X11-unix environment

ExecStartPre=/bin/sh -c '/usr/bin/vncserver -kill %i > /dev/null 2>&1 || :'

ExecStart=/sbin/runuser -l <USER> -c "/usr/bin/vncserver %i"

PIDFile=/home/<USER>/.vnc/%H%i.pid

ExecStop=/bin/sh -c '/usr/bin/vncserver -kill %i > /dev/null 2>&1 || :'

[Install]

WantedBy=multi-user.target

After:

[Unit]

Description=Remote desktop service (VNC)

After=syslog.target network.target

[Service]

Type=simple

# Clean any existing files in /tmp/.X11-unix environment

ExecStartPre=/bin/sh -c '/usr/bin/vncserver -kill :1 > /dev/null 2>&1 || :'

ExecStart=/sbin/runuser -l root -c "/usr/bin/vncserver :1"

PIDFile=/root/.vnc/%H:1.pid

ExecStop=/bin/sh -c '/usr/bin/vncserver -kill :1 > /dev/null 2>&1 || :'

[Install]

WantedBy=multi-user.target

Next, reload the systemd configuration:

[root@lunar1 ~]# systemctl daemon-reload

[root@lunar1 ~]#

Then enable the service so that it starts automatically at boot:

[root@lunar1 ~]# systemctl enable vncserver@:1.service

Created symlink from /etc/systemd/system/multi-user.target.wants/vncserver@:1.service to /usr/lib/systemd/system/vncserver@:1.service.

[root@lunar1 ~]# ll /etc/systemd/system/multi-user.target.wants/vncserver@:1.service

lrwxrwxrwx 1 root root 44 Jan 17 00:29 /etc/systemd/system/multi-user.target.wants/vncserver@:1.service -> /usr/lib/systemd/system/vncserver@:1.service

[root@lunar1 ~]#

Start the VNC service:

[root@lunar ~]# systemctl enable vncserver@:1.service

[root@lunar ~]# systemctl start vncserver@:1.service

[root@lunar ~]# systemctl status vncserver@:1.service

vncserver@:1.service - Remote desktop service (VNC)

Loaded: loaded (/usr/lib/systemd/system/vncserver@:1.service; enabled)

Active: active (running) since Thu 2015-10-08 20:39:00 CST; 5s ago

Process: 21624 ExecStop=/bin/sh -c /usr/bin/vncserver -kill :1 > /dev/null 2>&1 || : (code=exited, status=0/SUCCESS)

Process: 21634 ExecStartPre=/bin/sh -c /usr/bin/vncserver -kill :1 > /dev/null 2>&1 || : (code=exited, status=0/SUCCESS)

Main PID: 21668 (Xvnc)

CGroup: /system.slice/system-vncserver.slice/vncserver@:1.service

‣ 21668 /usr/bin/Xvnc :1 -desktop lunar:1 (root) -auth /root/.Xauthority -geometry 1024x768 -rfbwait 30000 -rfbauth /root/.vnc/passwd -rfbport 5901 -fp catalogue:/etc/X11/fontpat...

Oct 08 20:39:00 lunar systemd[1]: Starting Remote desktop service (VNC)...

Oct 08 20:39:00 lunar systemd[1]: Started Remote desktop service (VNC).

[root@lunar ~]#

On Linux 7, VNC uses ports in the 590x range; display :N listens on port 5900+N, so display :1 uses port 5901.

With IPv4 only:

[root@lunar ~]# lsof -i:5901

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

Xvnc 21668 root 7u IPv4 91713 0t0 TCP *:5901 (LISTEN)

[root@lunar ~]#

[root@lunar ~]# netstat -lnt | grep ':590'

tcp 0 0 0.0.0.0:5901 0.0.0.0:* LISTEN

[root@lunar ~]#

[root@lunar ~]# ss -lntp|grep 590

LISTEN 0 5 *:5901 *:* users:(("Xvnc",21668,7))

[root@lunar ~]#

With both IPv4 and IPv6 configured:

[root@lunar1 ~]# lsof -i:5901

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

Xvnc 7224 root 8u IPv4 157461 0t0 TCP *:5901 (LISTEN)

Xvnc 7224 root 9u IPv6 157462 0t0 TCP *:5901 (LISTEN)

[root@lunar1 ~]#


[root@lunar1 ~]# netstat -lnt | grep ':590'

tcp 0 0 0.0.0.0:5901 0.0.0.0:* LISTEN

tcp6 0 0 :::5901 :::* LISTEN

[root@lunar1 ~]#

[root@lunar1 ~]# ss -lntp|grep 590

LISTEN 0 5 *:5901 *:* users:(("Xvnc",pid=7224,fd=8))

LISTEN 0 5 :::5901 :::* users:(("Xvnc",pid=7224,fd=9))

[root@lunar1 ~]#
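The port rule is simply 5900 plus the display number. The hypothetical helpers below compute the port for a display and use ss to check whether anything listens on it over IPv4 or IPv6; they are a sketch, not a standard tool.

```shell
#!/bin/bash
# Map an X display (":1" or "1") to its VNC port: 5900 + display number.
vnc_port() {
    local n="${1#:}"
    case "$n" in
        ''|*[!0-9]*) echo "invalid display: $1" >&2; return 1 ;;
    esac
    echo $((5900 + 10#$n))   # 10# forces base 10, so ":08" is not read as octal
}

# Succeed if some socket is listening on the display's port (IPv4 or IPv6).
# Checks the 4th column of `ss -lnt` (local address:port), e.g. "*:5901" or ":::5901".
vnc_listening() {
    local port
    port="$(vnc_port "$1")" || return 1
    ss -lnt | awk -v p=":${port}\$" '$4 ~ p { found = 1 } END { exit !found }'
}

# Example:
#   vnc_listening :1 && echo "display :1 is listening on port $(vnc_port :1)"
```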

[root@lunar1 ~]# lsof -i:5901

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

Xvnc 6232 root 8u IPv4 154443 0t0 TCP *:5901 (LISTEN)

Xvnc 6232 root 9u IPv6 154444 0t0 TCP *:5901 (LISTEN)

[root@lunar1 ~]# systemctl stop vncserver@:1.service

[root@lunar1 ~]# lsof -i:5901

[root@lunar1 ~]# systemctl status vncserver@:1.service

● vncserver@:1.service - Remote desktop service (VNC)

Loaded: loaded (/usr/lib/systemd/system/vncserver@:1.service; enabled; vendor preset: disabled)

Active: inactive (dead) since Sun 2016-01-17 00:49:12 CST; 25s ago

Process: 7177 ExecStop=/bin/sh -c /usr/bin/vncserver -kill :1 > /dev/null 2>&1 || : (code=exited, status=0/SUCCESS)

Process: 6232 ExecStart=/usr/sbin/runuser -l root -c /usr/bin/vncserver :1 (code=exited, status=0/SUCCESS)

Process: 6197 ExecStartPre=/bin/sh -c /usr/bin/vncserver -kill :1 > /dev/null 2>&1 || : (code=exited, status=0/SUCCESS)

Main PID: 6232 (code=exited, status=0/SUCCESS)

Jan 17 00:47:54 lunar1 systemd[1]: Starting Remote desktop service (VNC)...

Jan 17 00:47:54 lunar1 systemd[1]: Started Remote desktop service (VNC).

Jan 17 00:49:12 lunar1 systemd[1]: Stopping Remote desktop service (VNC)...

Jan 17 00:49:12 lunar1 systemd[1]: Stopped Remote desktop service (VNC).

[root@lunar1 ~]# systemctl start vncserver@:1.service

[root@lunar1 ~]# systemctl status vncserver@:1.service

● vncserver@:1.service - Remote desktop service (VNC)

Loaded: loaded (/usr/lib/systemd/system/vncserver@:1.service; enabled; vendor preset: disabled)

Active: active (running) since Sun 2016-01-17 00:49:43 CST; 1s ago

Process: 7177 ExecStop=/bin/sh -c /usr/bin/vncserver -kill :1 > /dev/null 2>&1 || : (code=exited, status=0/SUCCESS)

Process: 7190 ExecStartPre=/bin/sh -c /usr/bin/vncserver -kill :1 > /dev/null 2>&1 || : (code=exited, status=0/SUCCESS)

Main PID: 7193 (runuser)

CGroup: /system.slice/system-vncserver.slice/vncserver@:1.service

‣ 7193 /usr/sbin/runuser -l root -c /usr/bin/vncserver :1

Jan 17 00:49:43 lunar1 systemd[1]: Starting Remote desktop service (VNC)...

Jan 17 00:49:43 lunar1 systemd[1]: Started Remote desktop service (VNC).

[root@lunar1 ~]# lsof -i:5901

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

Xvnc 7224 root 8u IPv4 157461 0t0 TCP *:5901 (LISTEN)

Xvnc 7224 root 9u IPv6 157462 0t0 TCP *:5901 (LISTEN)

[root@lunar1 ~]#

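Set the VNC password. The argument of vncpasswd is a password file, not a user name (vncpasswd root would create a file named root in the current directory), so run it as the user whose desktop it protects; it then writes ~/.vnc/passwd. For root, simply run vncpasswd, preferably before starting the service for the first time.

The final unit file for root's VNC service: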
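Since the service runs without a terminal, a missing password file is a plausible cause of start failures, so it can help to confirm that each user's file exists before starting the units. A hedged sketch: the function names are hypothetical, and ~/.vnc/passwd is the default location vncpasswd writes to.

```shell
#!/bin/bash
# Succeed if the VNC password file under the given home directory exists
# and is non-empty (vncpasswd writes ~/.vnc/passwd by default).
has_vnc_passwd() {
    local home_dir="$1"
    [ -s "${home_dir}/.vnc/passwd" ]
}

# Print the users (given as arguments) that have no VNC password file yet.
users_without_vnc_passwd() {
    local user home_dir
    for user in "$@"; do
        home_dir="$(getent passwd "$user" | cut -d: -f6)"
        if [ -z "$home_dir" ]; then
            echo "$user: no such user" >&2
        elif ! has_vnc_passwd "$home_dir"; then
            echo "$user"
        fi
    done
}

# Example (as root; root, grid and oracle are the users in this article):
#   for u in $(users_without_vnc_passwd root grid oracle); do
#       echo "set a password with: runuser -l $u -c vncpasswd"
#   done
```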

[Unit]

Description=Remote desktop service (VNC)

After=syslog.target network.target

[Service]

#Type=forking

Type=simple

# Clean any existing files in /tmp/.X11-unix environment

ExecStartPre=/bin/sh -c '/usr/bin/vncserver -kill :1 > /dev/null 2>&1 || :'

ExecStart=/sbin/runuser -l root -c "/usr/bin/vncserver :1"

#PIDFile=/home/root/.vnc/%H:1.pid

PIDFile=/root/.vnc/%H:1.pid

ExecStop=/bin/sh -c '/usr/bin/vncserver -kill :1 > /dev/null 2>&1 || :'

[Install]

WantedBy=multi-user.target

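Then configure the grid and oracle users the same way, each with its own unit file and display number (in this setup grid uses :2 and oracle uses :3).

VNC sometimes fails to start, for example: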

[root@lunar ~]# systemctl restart vncserver@:1.service

Job for vncserver@:1.service failed. See 'systemctl status vncserver@:1.service' and 'journalctl -xn' for details.

[root@lunar ~]# systemctl status vncserver@:1.service

vncserver@:1.service - Remote desktop service (VNC)

Loaded: loaded (/usr/lib/systemd/system/vncserver@:1.service; enabled)

Active: failed (Result: exit-code) since Thu 2015-10-08 20:18:22 CST; 17s ago

Process: 19175 ExecStart=/sbin/runuser -l <USER> -c /usr/bin/vncserver %i (code=exited, status=1/FAILURE)

Process: 19171 ExecStartPre=/bin/sh -c /usr/bin/vncserver -kill %i > /dev/null 2>&1 || : (code=exited, status=0/SUCCESS)

Oct 08 20:18:22 lunar systemd[1]: Starting Remote desktop service (VNC)...

Oct 08 20:18:22 lunar runuser[19175]: runuser: user <USER> does not exist

Oct 08 20:18:22 lunar systemd[1]: vncserver@:1.service: control process exited, code=exited status=1

Oct 08 20:18:22 lunar systemd[1]: Failed to start Remote desktop service (VNC).

Oct 08 20:18:22 lunar systemd[1]: Unit vncserver@:1.service entered failed state.

[root@lunar ~]#

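In this example the log already points to the cause: the unit file still contains the <USER> placeholder, so runuser fails with "user <USER> does not exist".

In general, troubleshoot as follows:

1. Check the log (/var/log/messages), or use journalctl, which is new in Linux 7 (see the earlier post in this series).

2. The usual problems fall into a few categories:

(1) The unit file is wrong.

(2) Temporary files under /tmp were, for some reason, not cleaned up automatically by the system.

(3) Problems caused by GNOME, for example after switching the desktop language between Chinese and English.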


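The fixes are all simple:

1. Make sure the configuration is correct.

2. Delete the stale temporary files.

3. Run the commands from the unit file by hand and see where they fail.

In my experience the third one, running the commands by hand, is the trump card: it solves almost every problem.

If none of that works (which has not happened to me so far), simply reinstall VNC. Ha ha!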
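Step 1 (making sure the configuration is correct) can be partly automated. The sketch below, with the hypothetical name `check_vnc_unit`, looks for the two mistakes behind the "user <USER> does not exist" error shown earlier: an unreplaced `<USER>` placeholder outside comments, and a `runuser -l` target user that does not exist. It assumes ExecStart= uses the `runuser -l USER -c ...` form from the template.

```shell
#!/bin/bash
# Sketch of a pre-flight check for a VNC instance unit file (hypothetical helper).
check_vnc_unit() {
    local unit_file="$1" user
    # Any non-comment line still containing the template placeholder?
    if grep -n '^[^#]*<USER>' "$unit_file" >&2; then
        echo "$unit_file: <USER> placeholder was not replaced" >&2
        return 1
    fi
    # Extract the user from: ExecStart=.../runuser -l USER -c "..."
    user="$(sed -n 's/^ExecStart=.*runuser -l \([^ ]*\) .*/\1/p' "$unit_file")"
    if [ -z "$user" ]; then
        echo "$unit_file: no 'runuser -l USER' found in ExecStart=" >&2
        return 1
    fi
    if ! id -u "$user" > /dev/null 2>&1; then
        echo "$unit_file: user '$user' does not exist" >&2
        return 1
    fi
}

# Example:
#   check_vnc_unit /usr/lib/systemd/system/vncserver@:3.service && echo OK
```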

Below is an example of the manual approach, following the commands in the unit file; here I use the oracle user's unit file:

[root@lunar2 ~]# cat /usr/lib/systemd/system/vncserver@:3.service

# The vncserver service unit file

#

# Quick HowTo:

# 1. Copy this file to /etc/systemd/system/vncserver@.service

# 2. Edit oracle and vncserver parameters appropriately

# ("runuser -l oracle -c /usr/bin/vncserver :3 -arg1 -arg2")

# 3. Run `systemctl daemon-reload`

# 4. Run `systemctl enable vncserver@:<display>.service`

#

# DO NOT RUN THIS SERVICE if your local area network is

# untrusted! For a secure way of using VNC, you should

# limit connections to the local host and then tunnel from

# the machine you want to view VNC on (host A) to the machine

# whose VNC output you want to view (host B)

#

# [user@hostA ~]$ ssh -v -C -L 590N:localhost:590M hostB

#

# this will open a connection on port 590N of your hostA to hostB's port 590M

# (in fact, it ssh-connects to hostB and then connects to localhost (on hostB).

# See the ssh man page for details on port forwarding)

#

# You can then point a VNC client on hostA at vncdisplay N of localhost and with

# the help of ssh, you end up seeing what hostB makes available on port 590M

#

# Use "-nolisten tcp" to prevent X connections to your VNC server via TCP.

#

# Use "-localhost" to prevent remote VNC clients connecting except when

# doing so through a secure tunnel. See the "-via" option in the

# `man vncviewer' manual page.

[Unit]

Description=Remote desktop service (VNC)

After=syslog.target network.target

[Service]

Type=simple

# Clean any existing files in /tmp/.X11-unix environment

ExecStartPre=/bin/sh -c '/usr/bin/vncserver -kill :3 > /dev/null 2>&1 || :'

ExecStart=/usr/sbin/runuser -l oracle -c "/usr/bin/vncserver :3"

PIDFile=/home/oracle/.vnc/%H:3.pid

ExecStop=/bin/sh -c '/usr/bin/vncserver -kill :3 > /dev/null 2>&1 || :'

[Install]

WantedBy=multi-user.target

[root@lunar2 ~]#

Run the kill command from the unit file by hand:

[root@lunar2 ~]# /bin/sh -c '/usr/bin/vncserver -kill :3'

Can't find file /root/.vnc/lunar2.oracle.com:3.pid

You'll have to kill the Xvnc process manually

[root@lunar2 ~]#

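Because this command runs as root, vncserver looks for the PID file in /root/.vnc rather than in /home/oracle/.vnc, so it cannot find it (running it as oracle, e.g. runuser -l oracle -c "/usr/bin/vncserver -kill :3", would look in the right place).

Check the temporary files: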

[root@lunar2 ~]# ll /tmp/.X11-unix

/tmp/.X11-unix:

total 0

srwxrwxrwx 1 root root 0 Jan 23 21:33 X1

srwxrwxrwx 1 grid oinstall 0 Jan 23 21:33 X2

srwxrwxrwx 1 oracle oinstall 0 Jan 23 21:33 X3

[root@lunar2 ~]#

X3 is the oracle user's temporary file (the X socket for display :3); delete it:

[root@lunar2 ~]# rm -rf /tmp/.X11-unix/X3

[root@lunar2 ~]#

Try starting it again:

[root@lunar2 ~]# /usr/sbin/runuser -l oracle -c "/usr/bin/vncserver :3"

Warning: lunar2.oracle.com:3 is taken because of /tmp/.X3-lock

Remove this file if there is no X server lunar2.oracle.com:3

A VNC server is already running as :3

[root@lunar2 ~]#

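The message shows one more leftover file, the lock file /tmp/.X3-lock. As the message itself says, remove it only if no X server for :3 is actually running (check with ps, for example); then delete it by hand: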

[root@lunar2 ~]# rm -rf /tmp/.X3-lock

[root@lunar2 ~]#

Start it by hand again:

[root@lunar2 ~]# /usr/sbin/runuser -l oracle -c "/usr/bin/vncserver :3"

New 'lunar2.oracle.com:3 (oracle)' desktop is lunar2.oracle.com:3

Starting applications specified in /home/oracle/.vnc/xstartup

Log file is /home/oracle/.vnc/lunar2.oracle.com:3.log

[root@lunar2 ~]#
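The manual cleanup above can be wrapped in a small function. This is a sketch under assumptions: it relies on the usual X server convention that /tmp/.X<N>-lock holds the server's PID as text, it deletes the lock file and socket only when that PID is no longer running, and the function name and optional directory argument (present only for testing) are hypothetical.

```shell
#!/bin/bash
# Sketch: remove stale X files for one display, only if its server is gone.
clean_stale_display() {
    local n="${1#:}" tmp_root="${2:-/tmp}"
    case "$n" in
        ''|*[!0-9]*) echo "invalid display: $1" >&2; return 1 ;;
    esac
    local lock="${tmp_root}/.X${n}-lock"
    local sock="${tmp_root}/.X11-unix/X${n}"
    local pid
    if [ -f "$lock" ]; then
        # The lock file holds the server PID, padded with spaces.
        pid="$(tr -d ' \n' < "$lock")"
        # /proc/<pid> exists while the process is alive (Linux-specific check
        # that also works for processes owned by other users).
        if [ -n "$pid" ] && [ -d "/proc/${pid}" ]; then
            echo "display :${n} is still in use by PID ${pid}; nothing removed" >&2
            return 1
        fi
        rm -f "$lock"
    fi
    rm -f "$sock"
}

# Example (as root), then start the server again:
#   clean_stale_display :3 && runuser -l oracle -c "/usr/bin/vncserver :3"
```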

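The server has started. Note that systemctl may still show Active: failed at this point.

That does not matter; try connecting with a VNC client and you will see that it works. I suspected a bug, or that the state was simply not synced back to systemctl, or a problem in GNOME itself.

A more likely explanation is that this Xvnc was started by hand with runuser rather than through systemctl, so systemd does not track it and the unit keeps its previous failed state. Stopping the manual instance and starting it again with systemctl start vncserver@:3.service brings it back under systemd's control.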

Summary: common VNC management commands on Linux 7

systemctl daemon-reload

systemctl enable vncserver@:1.service

systemctl status vncserver@:1.service

systemctl start vncserver@:1.service

systemctl status vncserver@:1.service

systemctl stop vncserver@:1.service

systemctl status vncserver@:1.service

systemctl start vncserver@:1.service

systemctl status vncserver@:1.service
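Since every command above differs only in the action and the display number, a thin wrapper can save typing. This is a hypothetical convenience sketch, not a standard tool; it only builds the instance unit name (vncserver@:N.service) and passes it to systemctl.

```shell
#!/bin/bash
# Build the instance unit name for a display: "1" or ":1" -> vncserver@:1.service
vnc_unit() {
    local n="${1#:}"
    case "$n" in
        ''|*[!0-9]*) echo "invalid display: $1" >&2; return 1 ;;
    esac
    echo "vncserver@:${n}.service"
}

# Hypothetical wrapper: vncctl <start|stop|restart|status|enable|disable> <display>
vncctl() {
    local action="$1" unit
    unit="$(vnc_unit "$2")" || return 1
    case "$action" in
        start|stop|restart|status|enable|disable) systemctl "$action" "$unit" ;;
        *) echo "usage: vncctl start|stop|restart|status|enable|disable DISPLAY" >&2; return 2 ;;
    esac
}

# Example: vncctl restart :1 && vncctl status 1
```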

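Set the VNC password for each user by running vncpasswd as that user (it writes ~/.vnc/passwd):

vncpasswd

runuser -l grid -c vncpasswd

runuser -l oracle -c vncpasswd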

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-1-Introduction

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-2-Changing the Hostname and Using hostnamectl

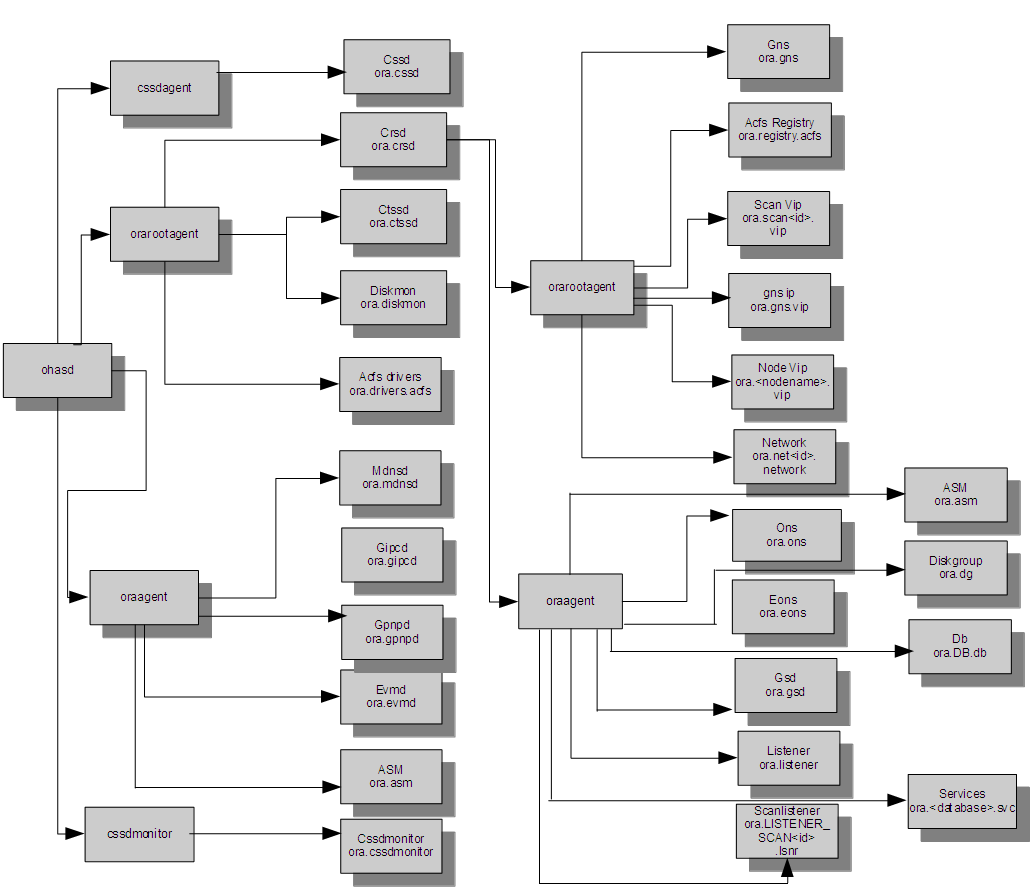

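Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-3-systemd (d.bin and the ohasd Daemon)

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-4-target (Graphical and Text Mode)

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-5-Firewall

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-6-Enabling or Disabling Services at Boot

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-7-Network Management: Adding a Network

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-7-Network Management: Changing the IP Address

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-7-Network Management: Renaming Network Interfaces

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-8-Which Services to Disable When Installing 11.2 RAC and 12.1 RAC on Linux7

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-9-The virbr0 Device on Linux 7.2

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-10-ABRT: Automatically Checking and Reporting Errors After System Startup

Linux7 (CentOS, RHEL, OEL) and Oracle RAC Environment Series-11-Configuring VNC and Handling Common Problems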